Abu Dhabi’s TII launches Falcon AI models that see and read

Falcon Perception outperforms Meta’s SAM 3 on key benchmarks - research paper

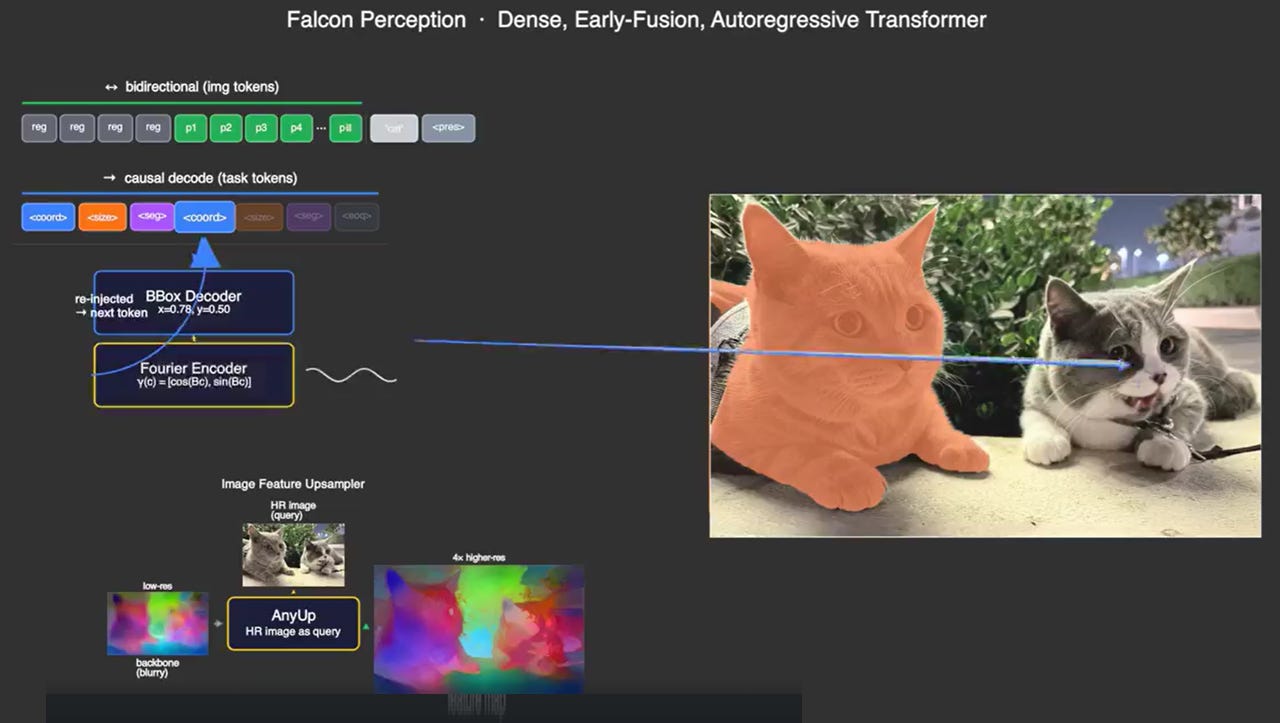

#UAE #R&D - Technology Innovation Institute (TII), the global applied research centre of the Advanced Technology Research Council (ATRC), has released two open-source AI vision models: Falcon Perception and Falcon OCR. The new models are designed to identify, segment and read objects in images using natural language instructions. Built on a single early-fusion Transformer architecture, the models challenge the conventional multi-stage pipeline approach that has dominated computer vision research. According to the researchers, Falcon Perception outperforms Meta’s SAM 3 on mask quality benchmarks, while Falcon OCR delivers strong document reading accuracy at just 300 million parameters.

SO WHAT? – Most AI vision systems rely on separate modules for seeing and understanding, a design that adds complexity and limits how well image and language features can interact. TII’s early-fusion approach collapses those capabilities into a single architecture, and the benchmark results suggest it works. The fact that a 0.6 billion parameter model can match or beat systems up to 15 times its size should catch the attention of anyone building vision AI for real-world deployment.

Here are some key facts about the new Falcon Vision models:

Technology Innovation Institute (TII) has released Falcon Perception and Falcon OCR as open-source models, targeting dense image segmentation and document text recognition respectively. Both vision models are built on the same early-fusion Transformer design, where image and text tokens interact from the very first processing layer rather than being handled separately.
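To make the early-fusion idea concrete, here is a minimal NumPy sketch. It is not TII's implementation: the token counts, embedding width and the simplified attention (single head, no learned projections) are all assumptions chosen for illustration. It only shows that once image and text tokens share one sequence, the very first attention layer already lets the prompt influence the image features.

```python
import numpy as np

def self_attention(tokens: np.ndarray) -> np.ndarray:
    """Single-head self-attention with identity projections (illustrative only)."""
    d = tokens.shape[-1]
    scores = tokens @ tokens.T / np.sqrt(d)
    scores -= scores.max(axis=-1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ tokens

rng = np.random.default_rng(0)
image_tokens = rng.normal(size=(16, 32))   # hypothetical: 16 image patch embeddings
text_tokens_a = rng.normal(size=(5, 32))   # hypothetical: embeddings for prompt A
text_tokens_b = rng.normal(size=(5, 32))   # hypothetical: embeddings for prompt B

# Early fusion: one joint sequence, so layer 1 already mixes both modalities.
fused_a = self_attention(np.concatenate([image_tokens, text_tokens_a], axis=0))
fused_b = self_attention(np.concatenate([image_tokens, text_tokens_b], axis=0))

# The image-token outputs differ between prompts after a single layer.
image_features_changed = not np.allclose(fused_a[:16], fused_b[:16])
print("prompt changes image features at layer 1:", image_features_changed)
```

In a multi-stage pipeline, by contrast, the image encoder would typically produce the same features regardless of the prompt, and the two modalities would only meet in a later module.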

Falcon Perception scores 68.0 Macro-F1 on the SA-Co benchmark, compared to 62.3 for Meta’s SAM 3. Gains are particularly strong in food and drink (70.3 vs 58.1), sports (75.2 vs 71.2), and attribute recognition (79.3 vs 71.1) categories.

TII also introduces PBench, a new diagnostic benchmark designed to test AI vision models on compositional prompts, covering objects, attributes, text-reading, spatial constraints, and relations. It includes a Dense split for crowded, complex scenes where existing benchmarks have largely saturated.

On PBench, Falcon Perception scores 57.0 average Macro-F1, ahead of SAM 3 at 44.4 and Qwen3-VL-30B at 52.7. On the Dense split specifically, its lead over the much larger Qwen3-VL-30B model widens to 72.6 versus 8.9.

Despite having just 0.6 billion parameters, Falcon Perception outperforms Moondream2 (2B) and Moondream3 (9B) across most complex reasoning tasks, and matches or beats Qwen3-VL-8B on spatial and relational tasks. That makes it roughly 3 to 15 times smaller than the models it matches or beats.
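The 3-to-15-times range follows directly from the parameter counts quoted above. A quick check, using only the sizes stated in this article (in billions of parameters):

```python
# Parameter counts in billions, as quoted in the article.
falcon_perception_b = 0.6
baselines_b = {
    "Moondream2": 2.0,
    "Qwen3-VL-8B": 8.0,
    "Moondream3": 9.0,
}

# How many times larger each baseline is than Falcon Perception.
size_ratios = {name: size / falcon_perception_b for name, size in baselines_b.items()}

for name, ratio in size_ratios.items():
    print(f"{name}: {ratio:.1f}x the size of Falcon Perception")
```

The smallest ratio comes from Moondream2 and the largest from Moondream3, which is where the two ends of the range originate.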

Falcon OCR reaches 80.3% accuracy on the olmOCR benchmark and 88.64 overall on OmniDocBench, at just 300 million parameters. Running on a single Nvidia A100-80GB GPU, it processes 5,825 tokens per second (or roughly 2.9 images per second) in a standard serving configuration.
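The two throughput figures can be cross-checked against each other. The sketch below uses only the numbers quoted above; the per-image token count and the hourly projection are derived estimates that assume the quoted rates are sustained averages, which the article does not state.

```python
# Throughput figures quoted for Falcon OCR on a single A100-80GB.
tokens_per_second = 5825
images_per_second = 2.9

# Implied average number of tokens processed per image (derived, not reported).
tokens_per_image = tokens_per_second / images_per_second

# Projected images per hour, assuming the rate holds steadily (an assumption).
images_per_hour = images_per_second * 3600

print(f"about {tokens_per_image:.0f} tokens per image")
print(f"about {images_per_hour:.0f} images per hour")
```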

The architecture introduces a “Chain-of-Perception” approach, where each segmentation instance is resolved in sequence: position first, then size, then the pixel mask. This ordering gives the model conditioning context before producing spatial outputs, improving accuracy in crowded scenes.
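The ordering described above can be illustrated with a toy decoder. This is a sketch of the position-then-size-then-mask sequence only, not TII's model: the three `predict_*` functions are hypothetical stand-ins that use simple rules on a binary grid, where the real system would use learned predictions.

```python
from dataclasses import dataclass

@dataclass
class Instance:
    position: tuple[int, int]   # (row, col) anchor, resolved first
    size: tuple[int, int]       # (height, width), resolved second
    mask: list[list[int]]       # binary pixel mask, resolved last

def predict_position(grid, claimed):
    """Hypothetical stage 1: first foreground pixel not yet assigned to an instance."""
    for r, row in enumerate(grid):
        for c, value in enumerate(row):
            if value and (r, c) not in claimed:
                return (r, c)
    return None

def predict_size(grid, position):
    """Hypothetical stage 2: extent of the object, conditioned on its position."""
    r0, c0 = position
    width = 0
    while c0 + width < len(grid[0]) and grid[r0][c0 + width]:
        width += 1
    height = 0
    while r0 + height < len(grid) and grid[r0 + height][c0]:
        height += 1
    return (height, width)

def predict_mask(grid, position, size):
    """Hypothetical stage 3: pixel mask, conditioned on both position and size."""
    (r0, c0), (h, w) = position, size
    return [
        [1 if r0 <= r < r0 + h and c0 <= c < c0 + w and grid[r][c] else 0
         for c in range(len(grid[0]))]
        for r in range(len(grid))
    ]

def decode_instances(grid):
    """Resolve instances one at a time: position, then size, then mask."""
    claimed, instances = set(), []
    while (position := predict_position(grid, claimed)) is not None:
        size = predict_size(grid, position)
        mask = predict_mask(grid, position, size)
        claimed |= {(r, c) for r, row in enumerate(mask) for c, v in enumerate(row) if v}
        instances.append(Instance(position, size, mask))
    return instances

grid = [
    [1, 1, 0, 0, 0],
    [1, 1, 0, 1, 1],
    [0, 0, 0, 1, 1],
]
instances = decode_instances(grid)
for inst in instances:
    print(inst.position, inst.size)
```

Each later stage receives the output of the earlier ones as input, which is the conditioning effect the article describes: by the time the mask is produced, the decoder already knows where the object is and how large it is.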

TII acknowledges one current limitation: presence calibration, where the model must correctly identify when a described object is absent from an image. Early tests applying reinforcement learning to address this have already yielded an 8-point improvement, with further work planned.

All models, code, benchmarks and a public playground are available to the community, and TII is actively soliciting feedback from both researchers and practitioners on where the models succeed and where they fall short.

Research team members include: Phúc L. Sofian Chaybouti, Aviraj Bevli, Ngoc Dung Huynh, Sanath Narayan, Ankit Singh, Wamiq Reyaz, Hakim Hacid and Yasser Dahou.

[Written and edited with the assistance of AI]

LINKS

Falcon Vision Playground (website)

Falcon Vision research paper (arXiv)

Falcon Perception model (Hugging Face)

Falcon Perception code (GitHub)

Falcon OCR model (Hugging Face)

PBench data set (Hugging Face)