MBZUAI researchers advance medical AI, cut AI training costs

MediX-R1 outperforms models three times its size on clinical benchmarks

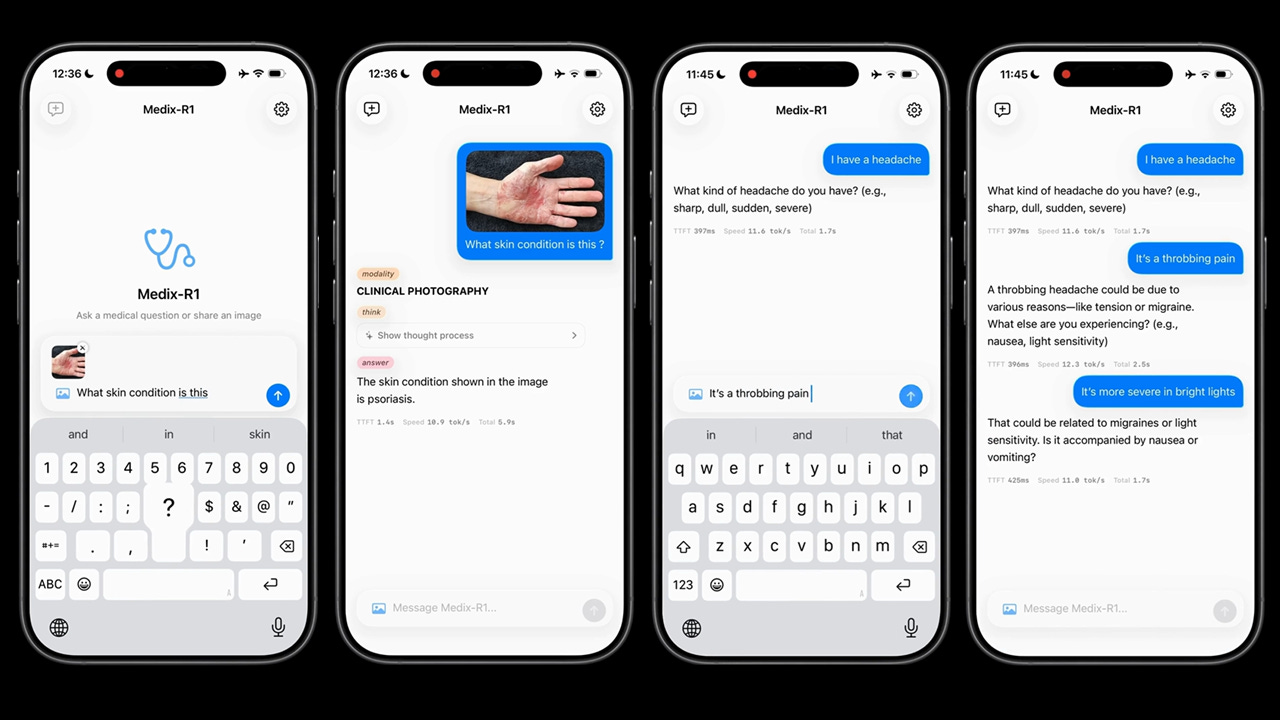

#UAE #LLMs – Researchers at Abu Dhabi-based global AI university Mohamed bin Zayed University of Artificial Intelligence (MBZUAI), in collaboration with medical doctors from India, have introduced MediX-R1, an open-source multimodal medical AI model that applies reinforcement learning to enable free-form clinical reasoning across 16 medical imaging modalities. Supported by an NVIDIA Academic Grant and an MBZUAI-IITD Research Collaboration Seed Grant, the research advances the state of medical AI by moving beyond multiple-choice outputs toward practical, open-ended clinical responses. MediX-R1 has already posted strong results, with a benchmark average of 73.6 percent across 17 medical datasets and 95.1 percent accuracy on the US Medical Licensing Examination. Doctors also preferred the model's responses in 72.7 percent of blind expert reviews.

SO WHAT? – Most medical AI models are trained and evaluated on multiple-choice question formats, which bear little resemblance to the open-ended, contextual reasoning that clinical practice actually demands. MediX-R1 is significant because it demonstrates that reinforcement learning (RL) with a carefully designed composite reward system can produce reliable, free-form clinical reasoning without requiring large volumes of costly human-annotated training data. The breakthrough could meaningfully lower the barrier to developing high-quality medical AI in resource-constrained settings.

Here are some key points from the MediX-R1 research:

Researchers from Abu Dhabi’s Mohamed bin Zayed University of Artificial Intelligence (MBZUAI) have released MediX-R1, a fully open-source multimodal medical AI model trained using reinforcement learning to produce open-ended clinical responses, moving beyond the multiple-choice formats that dominate most existing medical AI systems.

The research was conducted in collaboration with medical doctors from India and supported by an NVIDIA Academic Grant and an MBZUAI-IITD Research Collaboration Seed Grant.

The MediX-R1 model is available in 2 billion, 8 billion and 30 billion parameter variants, with the 2 billion parameter version capable of running locally on a mobile device without internet connectivity or server GPU requirements, broadening potential access in lower-resource healthcare settings.

The model achieves a benchmark average of 73.6% across 17 medical datasets, scoring 95.1% on the US Medical Licensing Examination and earning doctor preference in 72.7% of blind expert reviews, demonstrating strong real-world clinical reasoning capability.

MediX-R1’s 8 billion parameter variant outperforms Google’s MedGemma-27B model despite being approximately three times smaller, highlighting the efficiency gains delivered by the team’s composite reinforcement learning reward approach.

The model was trained on only approximately 51,000 instruction examples, a remarkably lean dataset by medical AI standards, made possible by a composite reward framework that eliminates the need for large-scale, costly clinical reasoning annotations.

MediX-R1 supports over 16 medical imaging modalities including X-ray, CT (Computed Tomography), MRI, ultrasound, microscopy, histopathology, endoscopy, mammography, and optical coherence tomography, making it one of the most broadly capable open-source medical vision-language models available.

A novel composite reward framework is central to MediX-R1’s training approach, combining an LLM-based accuracy signal, a medical embedding-based semantic reward, a format reward and a modality recognition reward to stabilise reinforcement learning and prevent reward hacking.

MediX-R1 is a research prototype and is not intended for clinical or commercial deployment at this stage, with the authors noting risks including potential hallucination of medical findings and the need for further fairness analysis and clinician-in-the-loop evaluation before any real-world clinical use.

The project is fully open-sourced under a CC-BY-NC-SA 4.0 licence, with model weights, training code, evaluation framework and datasets all publicly available, supporting transparency and enabling the broader research community to build on the work.

The research project team includes: Sahal Shaji Mullappilly (MBZUAI); Mohammed Irfan Kurpath (MBZUAI); Omair Mohamed (Jubilee Mission Medical College and Research Institute); Mohamed Zidan (JJM Medical College); Fahad Khan (MBZUAI); Salman Khan (MBZUAI); Rao Anwer (MBZUAI); and Hisham Cholakkal (MBZUAI).
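The composite reward framework described in the key points above combines four signals into one training reward. As a rough illustration only, the sketch below mixes them as a weighted sum. The weights, the function name `composite_reward`, the `RewardWeights` class and the assumption that every component is scored between 0 and 1 are all hypothetical; the article does not say how MediX-R1 actually combines or scales its rewards.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RewardWeights:
    """Hypothetical mixing weights; the real values are not given in the article."""
    accuracy: float = 0.5   # LLM-based judgement of answer correctness
    semantic: float = 0.3   # medical-embedding similarity to a reference answer
    format: float = 0.1     # response follows the expected output layout
    modality: float = 0.1   # model identified the imaging modality correctly


def composite_reward(accuracy, semantic, format_score, modality_score,
                     weights=RewardWeights()):
    """Return a weighted sum of four component rewards, each assumed to lie in [0, 1]."""
    scores = {
        "accuracy": accuracy,
        "semantic": semantic,
        "format": format_score,
        "modality": modality_score,
    }
    for name, value in scores.items():
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} reward must be in [0, 1], got {value}")
    return sum(getattr(weights, name) * value for name, value in scores.items())


if __name__ == "__main__":
    # A mostly correct, well-formatted answer.
    print(composite_reward(0.9, 0.8, 1.0, 1.0))
    # A well-formatted answer that is clinically wrong.
    print(composite_reward(0.0, 0.0, 1.0, 1.0))
```

With these assumed weights, an answer that is well formatted and names the right modality but is clinically wrong can earn at most about 0.2, which gives one intuition for how spreading the reward across several signals could discourage reward hacking on any single one.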

ZOOM OUT – The stakes for medical AI research of this kind are considerable. Generative AI in healthcare is forecast to grow into a $21.7 billion global market by 2032, according to Precedence Research, driven in large part by demand for AI assistants serving clinicians, healthcare administrators, insurers and patients. Medical large language and vision-language models are already being deployed for clinical question answering, triage support, report drafting and education. Yet the sector has an exceptionally low tolerance for error, making model training and fine-tuning a resource-intensive and technically demanding process. A central obstacle has been that most training pipelines remain tailored to multiple-choice question formats, which fail to reflect the open-ended, contextually nuanced reasoning that real clinical practice requires. MediX-R1's contribution is to demonstrate a practical and data-efficient path beyond that constraint.

[Written and edited with the assistance of AI]

LINKS

MediX-R1 microsite (MBZUAI)

MediX-R1 demo site (MBZUAI)

MediX-R1 research paper (arXiv)

MediX-R1 data (Hugging Face)

MediX-R1 code (GitHub)

Leaderboard and evaluation suite (MBZUAI)

Read more about clinical LLM research:

Saudi to launch world’s largest AI physician clinical trial (Middle East AI News)

M42 releases MEDIC leaderboard for clinical LLMs (Middle East AI News)

M42 delivers framework for evaluating clinical LLMs (Middle East AI News)

M42 releases new versions of clinical LLM (Middle East AI News)